Meta unveils the AI Research SuperCluster — a cutting-edge AI supercomputer for AI research.

Developing the next generation of advanced AI will require powerful new computers capable of quintillions of operations per second. Today, Meta is announcing a newly designed AI Research SuperCluster (RSC), which is among the fastest AI supercomputers running today and will be the fastest AI supercomputer in the world when it’s fully built by mid-2022. Our researchers have already started using RSC to train large models in natural language processing (NLP) and computer vision for research, with the aim of one day training models with trillions of parameters.

RSC will help Meta’s AI researchers build new and better AI models that can learn from trillions of examples; work across hundreds of different languages; seamlessly analyze text, images, and video together; develop new augmented reality tools; and much more. Researchers will be able to train the largest models needed to develop advanced AI for computer vision, NLP, speech recognition, and more. RSC will help us build entirely new AI systems that can, for example, power real-time voice translations to large groups of people, each speaking a different language, so they can seamlessly collaborate on a research project or play an AR game together. Ultimately, the work done with RSC will pave the way toward building technologies for the next major computing platform — the metaverse, where AI-driven applications and products will play an important role.

Why do we need an AI supercomputer at this scale?

Meta has been committed to long-term investment in AI since 2013, when the Facebook AI Research lab was established. In recent years, it has made significant strides in AI while leading in a number of areas, including self-supervised learning, where algorithms can learn from vast numbers of unlabeled examples, and transformers, which allow AI models to reason more effectively by focusing on certain areas of their input.

To fully realize the benefits of self-supervised learning and transformer-based models, various domains, whether vision, speech, language, or for critical use cases like identifying harmful content, will require training increasingly large, complex, and adaptable models. Computer vision, for example, needs to process larger, longer videos with higher data sampling rates. Speech recognition needs to work well even in challenging scenarios with lots of background noise, such as parties or concerts. NLP needs to understand more languages, dialects, and accents. And advances in other areas, including robotics, embodied AI, and multimodal AI will help people accomplish useful tasks in the real world.

High-performance computing infrastructure is a critical component in training such large models, and Meta’s AI research team has been building these high-powered systems for many years. The first generation of this infrastructure, designed in 2017, has 22,000 NVIDIA V100 Tensor Core GPUs in a single cluster that performs 35,000 training jobs a day. Up until now, this infrastructure has set the bar for Meta’s researchers in terms of its performance, reliability, and productivity.

In early 2020, Meta decided the best way to accelerate progress was to design a new computing infrastructure from a clean slate to take advantage of new GPU and network fabric technology. The goal was for this infrastructure to be able to train models with more than a trillion parameters on data sets as large as an exabyte, which, to provide a sense of scale, is the equivalent of 36,000 years of high-quality video.
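To make the exabyte comparison concrete, the short sketch below works out the average video bitrate it implies. The decimal definition of an exabyte (10^18 bytes) and the 365.25-day year are assumptions for illustration, not figures from the announcement.

```python
# Back-of-the-envelope check of the "exabyte = 36,000 years of video" comparison.
# Assumptions (not from the article): decimal exabyte, 365.25-day years.
EXABYTE_BYTES = 10**18
SECONDS_PER_YEAR = 365.25 * 24 * 3600
VIDEO_YEARS = 36_000


def implied_bitrate_mbps(total_bytes: int, years: float) -> float:
    """Average bitrate in megabits per second implied by the comparison."""
    seconds = years * SECONDS_PER_YEAR
    return total_bytes * 8 / seconds / 1e6


bitrate = implied_bitrate_mbps(EXABYTE_BYTES, VIDEO_YEARS)
print(f"Implied bitrate: {bitrate:.1f} Mbit/s")
```

Under these assumptions the comparison works out to roughly 7 Mbit/s, which is a plausible rate for high-definition video.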

While the high-performance computing community has been tackling scale for decades, Meta also had to ensure that all the needed security and privacy controls were in place to protect any training data being used. Unlike with our previous AI research infrastructure, which leveraged only open source and other publicly available data sets, RSC also helps us ensure that our research translates effectively into practice by allowing us to include real-world examples from Meta’s production systems in model training. By doing this, we can help advance research to perform downstream tasks such as identifying harmful content on our platforms as well as research into embodied AI and multimodal AI to help improve user experiences on our family of apps. We believe this is the first time performance, reliability, security, and privacy have been tackled at such a scale.

RSC: Under the hood

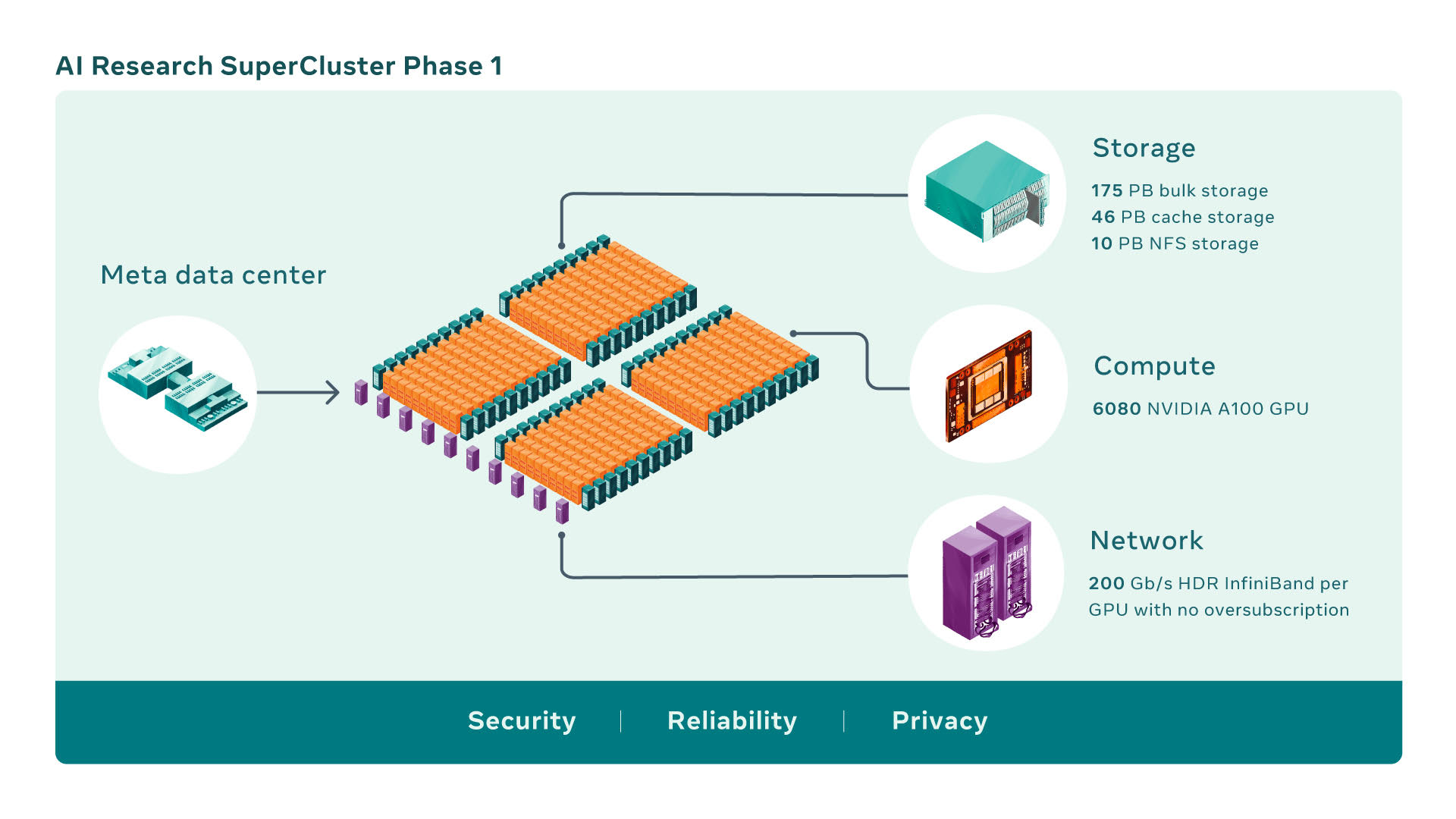

AI supercomputers are built by combining multiple GPUs into compute nodes, which are then connected by a high-performance network fabric to allow fast communication between those GPUs. RSC today comprises a total of 760 NVIDIA DGX A100 systems as its compute nodes, for a total of 6,080 GPUs — with each A100 GPU being more powerful than the V100 used in our previous system. Each DGX communicates via an NVIDIA Quantum 1600 Gb/s InfiniBand two-level Clos fabric that has no oversubscription. RSC’s storage tier has 175 petabytes of Pure Storage FlashArray, 46 petabytes of cache storage in Penguin Computing Altus systems, and 10 petabytes of Pure Storage FlashBlade.
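The figures above can be cross-checked with a few lines of arithmetic. This is only a sketch: the even split of each node's 1600 Gb/s link across its GPUs is an assumption made for illustration, and the variable names are ours.

```python
# Figures quoted in the article for RSC at announcement time.
DGX_NODES = 760
TOTAL_GPUS = 6_080
NODE_FABRIC_GBPS = 1600
STORAGE_PB = {"FlashArray": 175, "Altus cache": 46, "FlashBlade": 10}

# GPUs per compute node, derived from the two quoted totals.
gpus_per_node = TOTAL_GPUS // DGX_NODES

# Per-GPU share of the node's fabric bandwidth (assumes an even split).
per_gpu_gbps = NODE_FABRIC_GBPS / gpus_per_node

# Combined capacity of the three storage tiers, in petabytes.
total_storage_pb = sum(STORAGE_PB.values())

print(f"{gpus_per_node} GPUs per node, {per_gpu_gbps:.0f} Gb/s per GPU")
print(f"{total_storage_pb} PB of storage across all tiers")
```

The totals are consistent with eight GPUs per DGX A100 system and 231 petabytes across the three storage tiers.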

Early benchmarks on RSC, compared with Meta’s legacy production and research infrastructure, have shown that it runs computer vision workflows up to 20 times faster, runs the NVIDIA Collective Communication Library (NCCL) more than nine times faster, and trains large-scale NLP models three times faster. That means a model with tens of billions of parameters can finish training in three weeks, compared with nine weeks before.

Building an AI supercomputer

Designing and building something like RSC isn’t a matter of performance alone but performance at the largest scale possible, with the most advanced technology available today. When RSC is complete, the InfiniBand network fabric will connect 16,000 GPUs as endpoints, making it one of the largest such networks deployed to date. Additionally, the design includes a caching and storage system that can serve 16 TB/s of training data, and Meta plans to scale it up to 1 exabyte.

All this infrastructure must be extremely reliable, as we estimate some experiments could run for weeks and require thousands of GPUs. Lastly, the entire experience of using RSC has to be researcher-friendly so our teams can easily explore a wide range of AI models.

Penguin Computing, an SGH company and Meta's architecture and managed services partner, worked with the operations team on hardware integration to deploy the cluster and helped set up major parts of the control plane. Pure Storage provided us with a robust and scalable storage solution. NVIDIA provided us with its AI computing technologies featuring cutting-edge systems, GPUs, InfiniBand fabric, and software stack components like NCCL for the cluster.

Doing it remotely, during a pandemic

But there were other unexpected challenges that arose in RSC’s development — namely the coronavirus pandemic. RSC began as a completely remote project that the team took from a simple shared document to a functioning cluster in about a year and a half. COVID-19 and industry-wide wafer supply constraints also brought supply chain issues that made it difficult to get everything from chips to components like optics and GPUs, and even construction materials — all of which had to be transported in accordance with new safety protocols. The cluster was designed from scratch, creating many entirely new Meta-specific conventions and rethinking previous ones along the way.

Beyond the core system itself, there was also a need for a powerful storage solution, one that can serve terabytes per second of bandwidth from an exabyte-scale storage system. To serve AI training’s growing bandwidth and capacity needs, Meta developed a storage service, AI Research Store (AIRStore), from the ground up. To optimize for AI models, AIRStore utilizes a new data preparation phase that preprocesses the data set to be used for training. Once the preparation is performed one time, the prepared data set can be used for multiple training runs until it expires. AIRStore also optimizes data transfers so that cross-region traffic on Meta’s inter-datacenter backbone is minimized.
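The prepare-once, reuse-until-expiry idea can be sketched in a few lines. AIRStore's actual interface is not public, so everything here is hypothetical: the `PreparedDatasetCache` class, the `normalize` preprocessing step, and the 60-second lifetime are illustrative stand-ins only.

```python
import time
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class PreparedDataset:
    records: List[str]
    expires_at: float


class PreparedDatasetCache:
    """Hypothetical prepare-once, reuse-until-expiry store (not AIRStore's API)."""

    def __init__(self, prepare: Callable[[List[str]], List[str]],
                 ttl_seconds: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self._prepare = prepare
        self._ttl = ttl_seconds
        self._clock = clock
        self._cache: Dict[str, PreparedDataset] = {}
        self.prepare_calls = 0  # counts how often the costly step actually ran

    def get(self, name: str, raw: List[str]) -> List[str]:
        entry = self._cache.get(name)
        if entry is None or self._clock() >= entry.expires_at:
            # Missing or expired: run the preparation phase again.
            self.prepare_calls += 1
            entry = PreparedDataset(self._prepare(raw), self._clock() + self._ttl)
            self._cache[name] = entry
        return entry.records


def normalize(raw: List[str]) -> List[str]:
    """Stand-in for an expensive preprocessing step."""
    return [r.strip().lower() for r in raw]


# A fake clock makes expiry easy to demonstrate without sleeping.
fake_now = [0.0]
cache = PreparedDatasetCache(normalize, ttl_seconds=60.0, clock=lambda: fake_now[0])
raw = ["  Hello ", "WORLD"]

run1 = cache.get("demo", raw)   # first training run triggers preparation
run2 = cache.get("demo", raw)   # second run reuses the prepared data
calls_before_expiry = cache.prepare_calls

fake_now[0] = 61.0              # move past the expiry time
run3 = cache.get("demo", raw)   # preparation runs again
calls_after_expiry = cache.prepare_calls
```

Two training runs inside the lifetime share a single preparation pass; only the run after expiry pays the preprocessing cost again.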

How Meta safeguards data in RSC

To build new AI models that benefit the people using Meta's services (whether that’s detecting harmful content or creating new AR experiences), models need to learn from real-world data from our production systems. RSC has been designed from the ground up with privacy and security in mind so that Meta’s researchers can safely train models using encrypted user-generated data that is not decrypted until right before training. For example, RSC is isolated from the larger internet, with no direct inbound or outbound connections, and traffic can flow only from Meta’s production data centers.

To meet the privacy and security requirements, the entire data path from our storage systems to the GPUs is end-to-end encrypted and has the necessary tools and processes to verify that these requirements are met at all times. Before data is imported to RSC, it must go through a privacy review process to confirm it has been correctly anonymized. The data is then encrypted before it can be used to train AI models and decryption keys are deleted regularly to ensure older data is not still accessible. And since the data is only decrypted at one endpoint, in memory, it is safeguarded even in the unlikely event of a physical breach of the facility.
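The effect of regularly deleting decryption keys can be illustrated with a small sketch. This is not Meta's implementation: the `KeyStore` class and the key ID are hypothetical, and the XOR keystream is a toy stand-in for a real cipher that must never be used to protect actual data.

```python
import hashlib
import secrets
from typing import Dict, Optional


def _keystream_xor(key: bytes, data: bytes) -> bytes:
    """Toy XOR stream cipher built on SHAKE-256. NOT secure; illustration only."""
    stream = hashlib.shake_256(key).digest(len(data))
    return bytes(a ^ b for a, b in zip(data, stream))


class KeyStore:
    """Hypothetical in-memory key store supporting key deletion."""

    def __init__(self) -> None:
        self._keys: Dict[str, bytes] = {}

    def create(self, key_id: str) -> bytes:
        key = secrets.token_bytes(32)
        self._keys[key_id] = key
        return key

    def get(self, key_id: str) -> Optional[bytes]:
        return self._keys.get(key_id)

    def delete(self, key_id: str) -> None:
        self._keys.pop(key_id, None)


def encrypt(store: KeyStore, key_id: str, plaintext: bytes) -> bytes:
    """Encrypt under a freshly created key registered in the store."""
    return _keystream_xor(store.create(key_id), plaintext)


def decrypt_in_memory(store: KeyStore, key_id: str,
                      ciphertext: bytes) -> Optional[bytes]:
    """Return the plaintext, or None once the key has been deleted."""
    key = store.get(key_id)
    if key is None:
        return None
    return _keystream_xor(key, ciphertext)


store = KeyStore()
record = b"anonymized training record"
blob = encrypt(store, "batch-001", record)

before = decrypt_in_memory(store, "batch-001", blob)  # key still present
store.delete("batch-001")                             # scheduled key deletion
after = decrypt_in_memory(store, "batch-001", blob)   # data is now unreadable
```

Once the key is gone, the stored ciphertext can no longer be read, even though the bytes themselves remain on disk.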

Phase two and beyond

RSC is up and running today, but its development is ongoing. Once phase two of building out RSC is completed, it is expected to become the fastest AI supercomputer in the world, performing at nearly 5 exaflops of mixed-precision compute. Through 2022, Meta will work to increase the number of GPUs from 6,080 to 16,000, which will increase AI training performance by more than 2.5x. The InfiniBand fabric will expand to support 16,000 ports in a two-layer topology with no oversubscription. The storage system will have a target delivery bandwidth of 16 TB/s and exabyte-scale capacity to meet increased demand.
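A quick calculation shows how the phase-two numbers fit together. It is a rough sketch that treats 5 exaflops as an aggregate peak figure and uses a decimal exabyte; both are assumptions for illustration.

```python
# Phase-two targets quoted in the article.
GPUS_TODAY = 6_080
GPUS_TARGET = 16_000
TARGET_EXAFLOPS = 5.0
STORAGE_BANDWIDTH_TB_PER_S = 16

# Assumption: decimal units, so 1 exabyte = 1,000,000 terabytes.
EXABYTE_TB = 1_000_000

# Growth in GPU count, to compare with the quoted "more than 2.5x".
gpu_growth = GPUS_TARGET / GPUS_TODAY

# Aggregate target divided evenly across GPUs, in teraflops (1 EF = 1e6 TF).
tflops_per_gpu = TARGET_EXAFLOPS * 1e6 / GPUS_TARGET

# Time to stream one full exabyte at the target delivery bandwidth.
hours_to_stream_exabyte = EXABYTE_TB / STORAGE_BANDWIDTH_TB_PER_S / 3600

print(f"GPU count grows {gpu_growth:.2f}x")
print(f"About {tflops_per_gpu:.1f} TFLOPS per GPU at the 5-exaflop target")
print(f"One exabyte streams in about {hours_to_stream_exabyte:.1f} hours")
```

The GPU count grows by about 2.6x, in line with the quoted training speedup. The per-GPU figure of roughly 312 teraflops is close to NVIDIA's published peak mixed-precision Tensor Core throughput for the A100, and a full pass over an exabyte at 16 TB/s would take a little over 17 hours.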

We expect such a step-function change in compute capability to enable us not only to create more accurate AI models for our existing services but also to enable completely new user experiences, especially in the metaverse. Our long-term investments in self-supervised learning and in building next-generation AI infrastructure with RSC are helping us create the foundational technologies that will power the metaverse and advance the broader AI community as well.