Search and discovery solution Algolia shares insights in their new guide on why media brands need to get behind voice search.

The way consumers discover their next online experience is as important as what they’re looking for in the first place. Recent research shows that 71% of people prefer to search by voice rather than by keyboard. For media companies, this raises the essential question: Is their search voice-ready?

Voice for media — why now?

Multiple data points indicate a positive trend in voice interactions. eMarketer expects over 40% of the Internet-connected US population to own smart speakers by 2021. Among smartphone owners under the age of 30, 80% use a voice assistant. Voice is already here and it's making an impact.

Users want to be in control

For consumers, conversational interfaces such as voice are the first opportunity they have to be in control of what they want to do. On the average website or mobile app, even if the UX tries to accommodate users, it ultimately dictates a very specific means of interaction. It’s the same with traditional site search. Often, users approach search by trying to figure out what words and phrases the people who created the content used. The situation is even worse for consumers who have a very specific need, the very consumers you most want to be able to find your content.

This often leads to multiple revisions of the search query, or even abandoning the search altogether. Voice reverses this expectation. Consumers who have become used to Amazon Alexa and Google Assistant now expect to be able to speak similarly to how they would speak to a human. It doesn’t matter where they are, or what platform they’re on. If they’re speaking out loud, they want to be understood.

Media is the number one content consumed on smart speakers

Media companies in particular are well positioned to take advantage of voice and voice search. If your company deals in audio, then you should know that 17% of all audio listening at home happens on smart speakers, and a further 31% on mobile devices. This means that around half of audio listening happens on devices with voice input. By its very nature, audio on these devices is tailored for voice interactions.

Just as important is the growth of the “hearable” market led by Amazon, Google, and Apple. Amazon’s Echo Buds and Apple’s AirPods give listeners an always-available smart assistant, even away from the home or the car. Amazon and Google are further expanding their reach through partnerships with headphone manufacturers. What we see here is the growth of “ambient voice” interactions that likewise expand where media consumption is happening.

While voice interactions are growing, voice is utterly and naturally dominated by media. Yes, voice is certainly useful for the smart home, and consumers are searching for information about locations and products by voice. But still, listening to music and podcasts, pulling up television programs, and listening to audiobooks are the primary interactions people turn to on smart speakers. Watch anyone who gets a smart speaker for the first time and see what they do first. They’ll almost certainly want to begin consuming media.

Why voice for media now?

Consumers believe that apps should meet their way of speaking. Media companies have the challenge of building experiences that users enjoy and find easy to use, and that thus promote repeat usage. This is, at its core, a search and discovery problem.

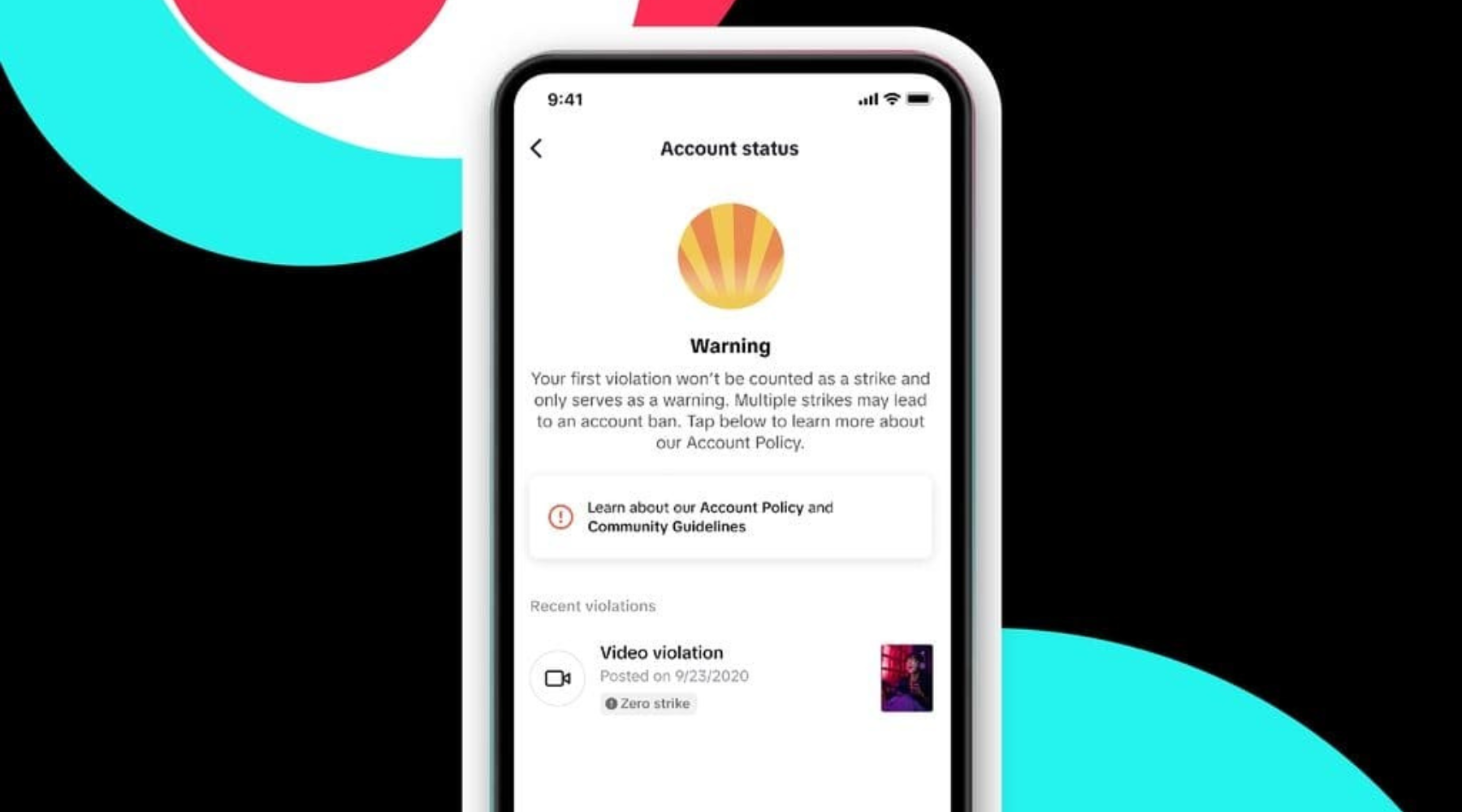

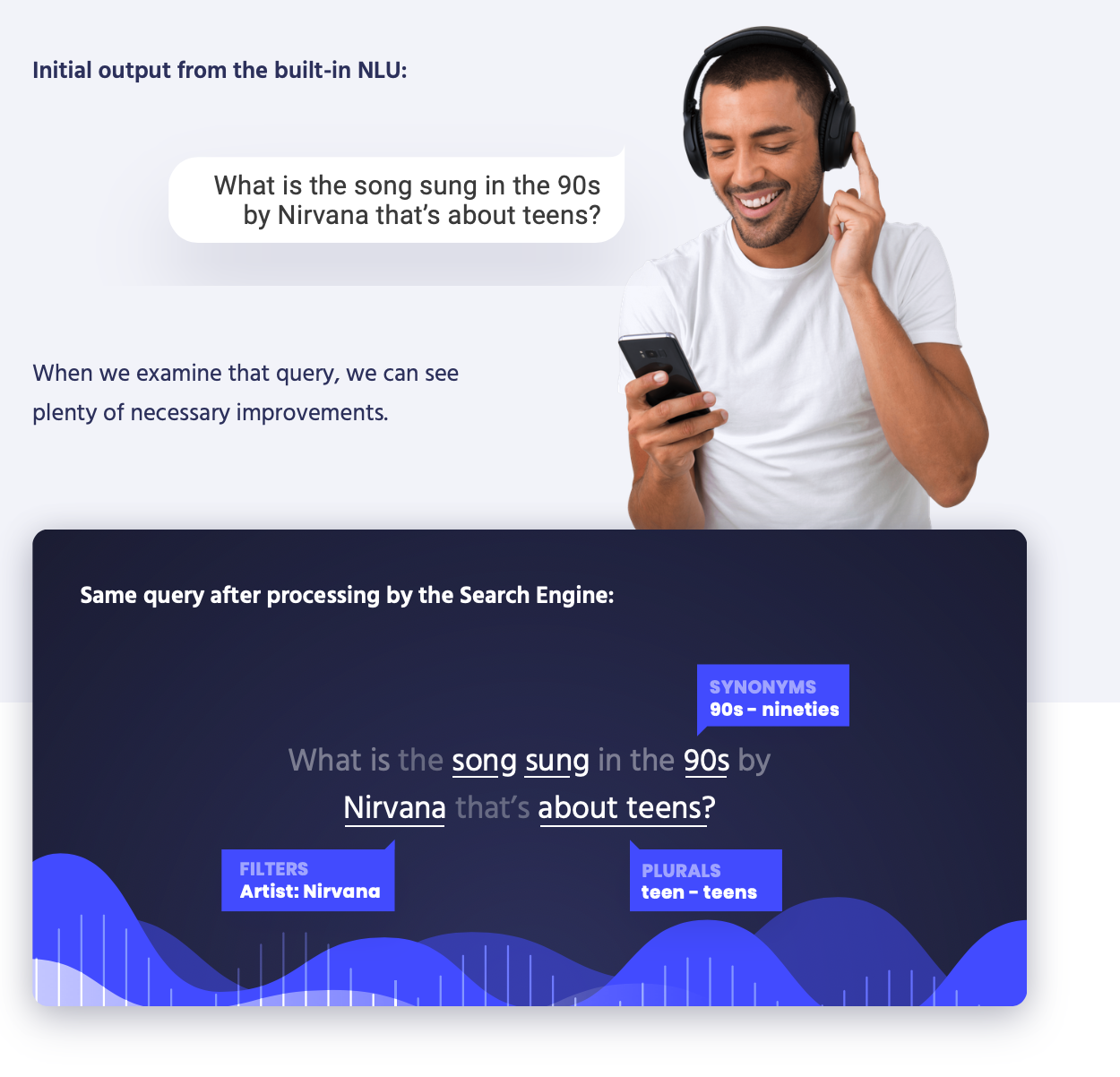

Voice-first applications, like those on Alexa and Google Assistant, need search backing because the built-in natural language understanding (NLU) is not always sufficient for matching requests to content. The NLU can determine intents (what a user wants to do) and entities (what “items” the user wants to specify such as show names or season numbers), but these can be rather rigid.

Search will improve the experience because a viewer can speak more naturally and a search engine will use filtering and full-text search to return the right result. This way, a query including a bit of the episode title, a piece of the show title, and some of the description will match a result.

NLU searches require full-text search and filtering

The combination of filtering and full-text search is important. Filtering alone, as most voice applications do through entity values, is unnecessarily strict. Full-text search alone, meanwhile, is unnecessarily broad. Filters help us to limit our search to what we know is correct, and then full-text search lets the user be expansive in their requests.
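To make the combination concrete, here is a minimal sketch of the "filter first, then full-text" idea. It is not any particular search engine's API: the `Episode` fields, the sample catalog, and the word-overlap scoring are all assumptions made up for illustration, and a production engine would use far richer ranking.

```python
from dataclasses import dataclass


@dataclass
class Episode:
    show: str
    season: int
    title: str
    description: str


# Hypothetical catalog, invented for this example.
CATALOG = [
    Episode("Deep Sea Stories", 1, "The Blue Whale",
            "A journey following the largest animal on Earth."),
    Episode("Deep Sea Stories", 2, "Coral Cities",
            "How reefs are built and why whale song carries so far."),
    Episode("Desert Nights", 1, "The Blue Hour",
            "Life in the dunes after sunset."),
]


def tokenize(text):
    """Lowercase the text and strip basic punctuation from each word."""
    return [word.strip(".,!?").lower() for word in text.split()]


def search(catalog, query, filters=None):
    """Apply exact-match filters, then rank survivors by word overlap."""
    filters = filters or {}
    # Step 1: filtering narrows the corpus to what we know is correct.
    candidates = [
        ep for ep in catalog
        if all(getattr(ep, field) == value for field, value in filters.items())
    ]
    # Step 2: full-text matching across show, title, and description.
    terms = set(tokenize(query))
    scored = []
    for ep in candidates:
        words = set(tokenize(f"{ep.show} {ep.title} {ep.description}"))
        score = len(terms & words)
        if score:
            scored.append((score, ep))
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [ep for _, ep in scored]
```

With this sketch, `search(CATALOG, "blue whale journey", {"season": 1})` only considers season 1 episodes and ranks the one matching the most spoken words first, while the same query without a filter also lets the season 2 episode through on a single shared word.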

Personalization builds relationships

Personalization is also an opportunity to improve voice search. It helps create a real relationship through conversation. It’s the relationship building that presents the greatest opportunity for media companies. Voice applications provide you the chance to be with consumers wherever they are, whenever they think about you. With voice applications that truly work, your brand is no more than a spoken request away at all times.

Qualities of good voice experiences

Whether an app is voice-first or voice is simply one part of a larger interaction model, every good voice experience will do two things above all else. First, it will match a user to information more quickly than typing or tapping. Second, it will anticipate user requests.

The quickest way to match requests to information is to reduce the corpus being searched by first applying filters, and then narrowing to the exact result through full-text search. How can one do this? One method is to combine search with an NLU platform that will detect intents. In the case of a mobile app, where search is the only intent, search can stand on its own because users won’t ask for help or to return to previous results, requests that are best handled by NLU.

Your app also needs the capability to automatically filter down or boost results based on specific attributes. Voice searchers are significantly more likely to filter through the query itself. On smart speakers, of course, they have no other choice. But even on mobile, they will generally prefer to speak their filters. That’s why it’s so useful to have search that will automatically detect filter values in a request and apply them to the query.
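As a rough illustration of detecting spoken filters, the sketch below pulls a season number and a genre out of a transcribed request and returns what is left as the full-text query. The genre list, the `season <number>` pattern, and the function name are assumptions for this example only. Real transcriptions may spell numbers out ("season two"), which this simple version does not handle.

```python
import re

# Hypothetical set of genre values the catalog can be filtered on.
KNOWN_GENRES = {"comedy", "news", "drama"}


def extract_filters(query):
    """Split a spoken request into (filters, remaining full-text query)."""
    filters = {}
    remaining = query.lower()

    # Detect a phrase such as "season 2" and remove it from the text query.
    match = re.search(r"\bseason (\d+)\b", remaining)
    if match:
        filters["season"] = int(match.group(1))
        remaining = remaining[:match.start()] + remaining[match.end():]

    # Treat the first known genre word as a filter rather than a search term.
    words = []
    for word in remaining.split():
        if word in KNOWN_GENRES and "genre" not in filters:
            filters["genre"] = word
        else:
            words.append(word)

    return filters, " ".join(words)
```

For a request like "comedy podcasts about cooking season 2", this yields the filters `season = 2` and `genre = "comedy"`, leaving "podcasts about cooking" for full-text search.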

The personalization layer can help ease the process of discovering content. Users no longer need to introduce their interests with every query. Instead, they can simply say what’s relevant at that time. For example, if the platform knows that the user prefers short podcasts, personalization can boost those under 15 minutes long. Isn't that much easier than asking a full-fledged query?
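The short-podcast example can be sketched as a re-ranking step. This is one possible approach under stated assumptions, not a description of how any specific product implements personalization: the `Podcast` fields, the `relevance` score (assumed to come from the search engine), and the boost value of 0.5 are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Podcast:
    title: str
    minutes: int
    relevance: float  # assumed text-match score supplied by the search step


def personalize(results, prefers_short, max_minutes=15, boost=0.5):
    """Re-rank results, boosting short episodes for users who prefer them."""
    def score(podcast):
        is_short = podcast.minutes < max_minutes
        bonus = boost if prefers_short and is_short else 0.0
        return podcast.relevance + bonus

    # Boosting reorders results; nothing is removed from the list.
    return sorted(results, key=score, reverse=True)
```

Boosting rather than hard filtering is a deliberate choice in this sketch: a long episode that matches the request very well can still appear, just lower in the list.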

Voice search is growing at an immense pace. It’s growing through more and more companies implementing voice. It’s growing through better tooling and new platforms. And it’s growing through increased expectations on the part of users. Media companies that continue to put off voice will fall behind, and companies that implement voice poorly will have disappointed customers who don’t give second chances easily. Voice search not only improves the modern user experience but often makes or breaks it. Media companies must get on board.