Under the new system, offending accounts will accrue strikes as the content is removed.

TikTok has announced an updated system for account enforcement. The platform's Community Guidelines establish and explain which behaviors and content it does and does not allow. When people violate these policies, TikTok takes action.

The recent update to the account enforcement system aims to better act against repeat offenders and to help TikTok more efficiently and quickly remove harmful accounts, while promoting a clearer and more consistent experience for the vast majority of creators who want to follow policies.

Why TikTok updated the current account enforcement system

The existing account enforcement system leverages different types of restrictions, like temporary bans from posting or commenting, to prevent abuse of product features while teaching people about the policies in order to reduce future violations.

While this approach has been effective in reducing harmful content overall, TikTok heard from creators that it can be confusing to navigate. It can disproportionately impact creators who rarely and unknowingly violate a policy, while potentially being less efficient at deterring those who violate policies repeatedly. Repeat violators tend to follow a pattern: analysis by TikTok has found that almost 90% consistently commit violations using the same feature, and over 75% repeatedly violate the same policy category.

How the streamlined account enforcement system will work

Under the new system, if someone posts content that violates one of TikTok's Community Guidelines, the account will accrue a strike as the content is removed. If an account meets the threshold of strikes within either a product feature (e.g. comments, Live) or a policy (e.g. Bullying and Harassment), it will be permanently banned. Those policy thresholds can vary depending on a violation's potential to cause harm to community members; for example, there may be a stricter threshold for violating TikTok's policy against promoting hateful ideologies than for sharing low-harm spam.

TikTok will continue to issue permanent bans on the first strike for severe violations, including promoting or threatening violence, showing or facilitating child sexual abuse material (CSAM), or showing real-world violence or torture. As an additional safeguard, accounts that accrue a high number of cumulative strikes across policies and features will also be permanently banned. Strikes will expire from an account's record after 90 days.
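The rules described above can be illustrated with a minimal Python sketch. It is not TikTok's implementation: the class and function names are hypothetical, and every threshold value is invented for illustration, since the announcement does not publish the actual numbers. Only the 90-day expiry, the first-strike ban for severe violations, and the three kinds of threshold (per policy, per feature, cumulative) come from the announcement.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta

# From the announcement: strikes expire after 90 days.
STRIKE_LIFETIME = timedelta(days=90)

# Hypothetical values: the announcement does not state real thresholds.
POLICY_THRESHOLDS = {"hateful_ideologies": 2, "spam": 5}
FEATURE_THRESHOLDS = {"comments": 4, "live": 3}
CUMULATIVE_THRESHOLD = 8
# Hypothetical labels for violations that lead to a ban on the first strike.
SEVERE_POLICIES = {"csam", "threatening_violence"}


@dataclass(frozen=True)
class Strike:
    policy: str
    feature: str
    issued_at: datetime


@dataclass
class Account:
    strikes: list = field(default_factory=list)
    banned: bool = False

    def active_strikes(self, now):
        """Return the strikes that have not yet expired at time `now`."""
        return [s for s in self.strikes if now - s.issued_at < STRIKE_LIFETIME]

    def record_violation(self, policy, feature, now):
        """Add a strike and return True if the account is now banned."""
        self.strikes.append(Strike(policy, feature, now))
        if policy in SEVERE_POLICIES:
            self.banned = True  # severe violations: ban on the first strike
            return self.banned

        active = self.active_strikes(now)
        policy_count = sum(s.policy == policy for s in active)
        feature_count = sum(s.feature == feature for s in active)
        # Policies or features without a specific entry fall back to the
        # cumulative threshold (an assumption of this sketch).
        if (
            policy_count >= POLICY_THRESHOLDS.get(policy, CUMULATIVE_THRESHOLD)
            or feature_count >= FEATURE_THRESHOLDS.get(feature, CUMULATIVE_THRESHOLD)
            or len(active) >= CUMULATIVE_THRESHOLD
        ):
            self.banned = True
        return self.banned
```

In this sketch, an account that violates the hypothetical `hateful_ideologies` policy twice within 90 days is banned, while the same two violations spaced more than 90 days apart leave it with a single active strike.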

Helping creators understand their account status

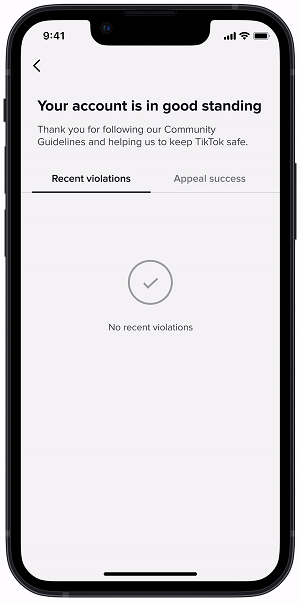

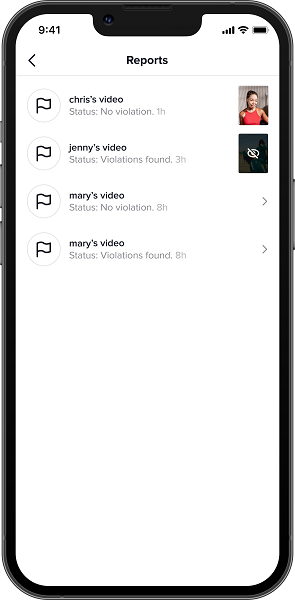

These changes are intended to drive more transparency around TikTok's enforcement decisions and help the community better understand how to follow the Community Guidelines. To further support creators, TikTok will roll out new in-app Safety Center features for creators in the coming weeks. These include an "Account status" page where creators can easily view the standing of their account, and a "Report records" page where creators can see the status of reports they've made on other content or accounts. These new tools add to the notifications creators already receive if they've violated policies, and support creators' ability to appeal enforcement actions and have strikes removed if an appeal is valid. TikTok will also begin notifying creators if they're on the verge of having their account permanently removed.

Making consistent, transparent moderation decisions

As a separate step toward improving transparency about moderation practices at the content level, TikTok is beginning to test a new feature in some markets that would provide creators with information about which of their videos have been marked as ineligible for recommendation to the For You feed, let them know why, and give them the opportunity to appeal.

The updated account enforcement system is currently rolling out globally and TikTok will notify all community members as this new system becomes available to them. TikTok will continue evolving and sharing progress around the processes used to evaluate accounts.